Contents

- Introduction

- Related Work

- Motivations

- Network Architectures

- Description Languages

- Presentation

- Plan (outdated)

Abstract

We are about to propose a technique describing how to effectivelly train a neural network given an image to produce a formal description of that given image. The basic approach of the proposed technique is an intention to design a neural network capable of transforming sensoric data (static image, but possibly also sound, video; generaly a phenotype) to a code (formal expression, i.e. syntactic tree of a program), such that the code/expression/program (a.k.a. genotype) evaluates to a value that is similar with the input sensoric data, ideally identical.

TLDR: The goal is to construct a neural network that “sees the code”.

The sourcecode of the project will gradually appear in the TFGPy repository (now the i2c specific code is in the i2c.py file).

Preliminary experiment seems to have nice results (PDF). We used a simple convolution network with residual connections as encoder of images and classical recurrent decoder for generating expressions in the prefix notation.

Introduction

I too See: Images to Code.

Input domain $I$ : Inputs are Images. We want to use convolutions to “parse” them, as cats or monkeys do. So, if $i \in I$, then $i$ is an image.

Ouput domain $C$ : Our neural networ will output a code $c$. A code $c \in C$ is a syntactic tree of a program expression describing a specific image $i$. We can evaluate a code $c$ by our hand crafted render function $R$, such that: $$c \in C \implies R( c ) = i \in I$$

The key to this whole idea lies in uderstanding the role of the function $R$ as a provider of sematics. We define R to help us.

Render function $R$ : A render function $R$ may be given by a $\Gamma$ set, we donete this fact by writing $R_\Gamma$. $\Gamma$ is a library represented as a collection of symbols, where each symbol $s$ has:

- an implemetation $R_s$,

- and a type $\tau_s$.

We plan to design our network to be a kind of recurrent classificator (“into symbols”) with ability to iteratively zoom deeper into the image based on attention mechanism. What we really want to do is to compute an approximation of $R^{-1}$: We want to construct an iverse of the render function. So listen up:

Dataset $D$ : $D$ is a collection of $(i, c)$ pairs such that $i = R( c )$, where $i$ is input image and $c$ is the desired output code for the input image $i$. Colecttion $D$ serves as training and testing dataset for the network. We obtain these data samples by automatically generating $c$ codes. The generated learning dataset $D$ should obey one of the following “Occam Razor” constraints:

Sharp Razor:

- (a) A specific image $i$ is at most once in $D$,

- (b2) For each $(i, c) \in D$ holds that $c$ is the shortest possible code such that $R(c ) = i$.

A practical approximation of the Sharp Razor:

- (a) same as (a) in the Sharp Razor.

- (b1) If two codes $c_1, c_2$ such that $R(c_1) = i = R(c_2)$ are generated for $D$, keep the shorter one (i.e. keep the one described with less symbols).

TODO: Mention type system stuff approprietly in the introduction.

Related Work

Papers:

- Show, Attend and Tell: Neural Image Caption Generation with Visual Attention: Using NN to describe images in natural languages using ConvNets and Attention mechanism.

- Neural Machine Translation by Jointly Learning to Align and Translate: Seminal work on Attention mechanism for NNs.

- Sequence to Sequence Learning with Neural Networks: Seq2Seq by Google using two LSTM networks.

- Attention Is All You Need: Seq2seq by Google using only Attention, dispensing with recurrence and convolutions entirely.

- Neural Programmer: Inducing Latent Programs with Gradient Descent

Blogs:

- Attention and Augmented Recurrent Neural Networks: Among other interesting things, mentions Neural Prgrammer, a very related idea to our one.

- Neural Networks, Types, and Functional Programming

Motivations

TODO: Reorganize these ideas to more fluent and logical text. E.g. start with the Phenotype to Genotype introduction. And, as we now have an intution of Genotype and Phenotype general features, give a motivation for choosing images as Phenotype/Input domain and code as Genotype/Output domain.

Motivation for the choice of the input domain

Why is it a good idea to take images as an input domain?

Becauce ConvNets are a successful invention capable of analysing visual data and outputing sequence outputs: Describing images in natural languages using neural networks works, so it should also work using more rigid language (than a natural one) as an output domain such as formal languages.

Motivation for the output domain in particular

(i.e. with respect to the input domain “images”)

Why is it a interesting task to search fot a image description (code, expression, program) that can be rendered as a specific image?

ConceptArt to FinalProduct argument

Imagine that you have a vido game (e.g. PacMan) with DSL for description of Levels. Imagine also that you have a level sketch image drawn by a concept artist, a game designer, or user. You can transform the sketch into your formal description (e.g. your internal level data file format) using i2c.

Other example for image input:

- Control: agent’s trajectory -> program controlling movement

- 2d photo(s) -> 3d model

Analogical examples on other input domains:

- Sound: recorded sound -> musical notes

- Video: raster video movie.avi -> vector animation

- Video: rotating_apple.avi -> 3d apple model

- Control: agent’s behavior (generalized trajectory, e.g. a stream of observable features (in a vector)) -> program controlling movement

Phenotype to Genotype Argument

Other possible nicknames:

- Appearance to Substance

- Form to Meaning

- External Presentation to Internal Structure: (vnejsi-projev 2 vnitrni-struktura)

Generalization but maybe more, in a sanse, inverse of the ConceptArt to FinalProduct.

Wikipedia describes form as:

Form is the shape, visual appearance, or configuration of an object. In a wider sense, the form is the way something is or happens.

My description: external appearence (observable shape and behavior).

My note: Not to be confused with substantial form.

maybe better formulation: Substance vs. Appearance

Phenotype generalizations/synonyms:

- Manifestation

- Presentation

TODO: More motivations follow, write down explanations

- Compression argument: …

- Test capabilities and limitations of NNs arg: jakože složitá, rekurzivni/fraktalni struktura zda jde pochytit necim spis intuitivnim jako je nn

- formalni jazyk aspiruje na veci konkretnost: zirafa v lese pasuje na spoustu obrázků (je to spis typ nez hodnota), zatimco formal img description se vykresli jednim jedinym zpusobem. tedy je to i lip objektivne testovatelný.

- A nejen to, že můžeme generovat učicic data, my můžeme generovat i pomocná učící data jako jsou sugested attentions. Zkratka máme nad obrázky velkou controlu/moc a je na nás jak ji šikovně využijem.

- Tim ze deskriptivni obrazky odpovidaj vektorovym obrazkum (myšleno img-terminologii, opakna od rastrovych) jde udelat přirozeně zoomování s “nekonecnou” presnosti. To umoznuje jednak pouzit nejaky novel zpusob rekurentniho kroku, ale i to ze vstupni dimenze muze byt pomerne mala, pokud implementujem nejaky efektivne pouzitelny mechanizmus zoomu.

- vstupni data lze “nekonecne” generovat

- formalni jazyk muze rangovat pres ruzny pozice spektra abstrakce: bod, cara, plocha, na, stůl, strom

Motivation for the choice of output domain in general

(i.e. without respect to the input domain “images”)

Current methods for approaching the program synthesis task are based mainly on searching in discrete spaces. (Example of program synthesis methods: GP, MCTS, maybe mention also: ILP, CSP). If we manage to learn a neural network to generate code, … tak má to nový “spojitý aspekt”:

- Faze uceni probiha ve spojitem a diferencovatelném prostoru. Tento prostor (i.e. prostor transformatorů z fenotypu/hodnoty do genotypu/výrazu) je o jednu uroven abstrakce vys nez prostor prohledavani prostoru genotypu. Proto platí, že pokud se NN naučí tak, ze je schopna generalizovat, je výstup vytvoren na základe puštěni site, kde se neprohledava.

- neformalne: To co se uci jde chapat jako intuici/vhled a to pasuje na tu spojitost.

Network Architectures

Just some notes, for now:

- simple root symbol classifier (must be balanced, may benefit from inclusion of variety of colors or many repetition of W and B examples) with zooming

- multiple inputs, eg original image, heat map of the attention/zoom, zoomed section

- multiple networks, each one for a different output size, possibly network predicting the size. This may possibly result in simple architectures.

Description Languages

One of the key features of formal languages is their hierarchical nature. They are perfectly suited for describing objects with hierarchical structure. Hierarchical structure provides natural ways to describe visual self-similarty as we know it from mathematics (fractals) and nature (trees, leaves, snowflakes, feathers, rivers, lightning, lungs, coastlines, mountains, clouds, Nautilus shells, Romanesco broccoli, …).

An exemplary piece of a hierarchicaly structured object is a tree (the living structure in a forest), with a benefit of being a visually stunning example. Visually speaking, simplified trees are composed of simple parts: branches and leaves. These parts are composed into a hierarchy. Hierarchy is naturally describable as a tree (the syntactic graph structure). A living tree can by desscribed by a syntactic tree. Their structures overlap each other. (TODO picture)

Let me brainstorm, we humans use two basic represent

Symbols

- Types

Numerical paprameters

Todo

solution1: kazdej symbol muze mit svoji sit pro urceni svejch ciselnejch parametru. Attentions jde zkusit obdobně.

Various symbol families

- F1: Symbol family1: Image Splitting Operations.

- F2: Symbol family2: BoxesOnBoxes. Both F1&2 na to jdou skrz obdelniky, liší se v tom jak přistupují ke stromovosti.

- F3: Symbol family3: vykresleni xy fce F3 má v sobě materialovej vajb, jeden si muze predstavit ze (Fill Red) jakozto listova cast F1 (obdobne pro vybarveni krabice v F2) je hodne simple pripad toho vo zvladne F3. Muzeme rict, ze F1 a F2 jsou vic high level, nebo dle 3D terminologie ze zajistujou model, kterej pak F3 pokreje materiálem.

Family 1: Image Splitting Operations

$\Gamma_1 = \{ h : I^2 \rightarrow I , v : I^2 \rightarrow I ,q : I^2 \rightarrow I ,Black: I , White: I \}$

What is $I$?

We want to describe the type $I$ more informativelly, i.e. define $I$ as I.

We can start with S:

S = PxPos -> Color

S is a simple version of $I$, where:

PxPos = (X,Y)

Color = (R,G,B)

Hint: S may also stand for Shader.

If we want to take into consideration the zoom, then:

I = Zoom -> S = Zoom -> (PxPos -> Color)

where

Zoom = (Pos,Pos)

i.e. Zoom is (TopLeftPos,BottomRightPos)

h … a horizontal split operation

h : I^2 -> I

h : (Zoom -> S)^2 -> Zoom -> S

h (i1,i2) z =

let (z1,z2) = split_h z

in merge_h (i1 z1) (i2 z2)

h is given by (split_h, merge_h), the rest is general (e.g. same for v, and with a little bit of generalization also for q).

Let

fsym : I^k -> I

then

split_fsym : Z -> Z^k

merge_fsym : S^k -> S

Note: Zoom is very similar to Attention; Zoom is a kind of discrete attention.

Should Pos = PxPos ??

- (Pos should be Float if we want Pos = PxPos.)

- Approach1: PxPos for any zoom goes throug whole domain with steps depending on Resolution, i.e. idependently of a specific zoom.

- Approach2: S is “the core”, Zooming is done just by calling appropriet PxPos given by zoom.

- Q: Clearly, Approach1 is sometimes more suitable (e.g. fill a zoomed area with circle). Are ther more suitable cases for Approach2 also? A: App2 is more sutable if we want to fill difrently sized areas (zooms) with a repeating pattern. Can we generalize them and see what is the core of the diff?

- Q: Is there a bi-transformation between those two approaches? A: I am sure there is!

- The situation is more fine grained, viz zelenej sešítek z 9.4.18.

Dataset $D$

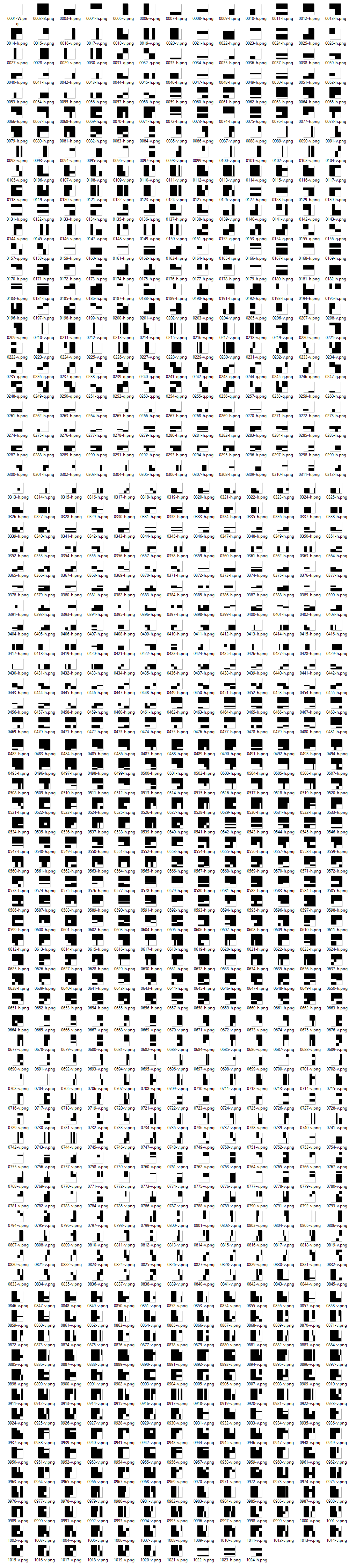

First 1024 images in $\Gamma_1$:

Presentation Abstract

Image to Code

We present a technique describing how to effectively train a neural network given an image to produce a formal description of the given image. The basic motivation of the proposed technique is an intention to design a new tool for automatic program synthesis capable of transforming sensory data (in our case static image, but generally a “phenotype”) to a formal code expression (i.e. syntactic tree of a program), such that the code (from evolutionary perspective a “genotype”) evaluates to a value that is similar to the input data, ideally identical.

Our approach is partially based on our technique for generating program expressions in the context of typed functional genetic programming. We present promising results evaluating a simple image description language achieved with a deep network combining convolution encoder of images and recurrent decoder for generating program expressions in the sequential prefix notation and propose possible future applications.

Plan (outdated)

Done

- Základně naštudovat related works

- Basic render, co umi $\Gamma_1 = \{ h : I^2 \rightarrow I , v : I^2 \rightarrow I ,q : I^2 \rightarrow I ,Black: I , White: I \}$, případně libovolný barvy.

- Už mám rozběhanej generator a udelaný jednoduchý vstupni data. Vstupni data jsou udělaný tak, že splňují Rough Razor pomocí obrazkove hashe, ted používám pHash: Generuju od nejmensiho kodu po nejvetsi a jakmile narazime na obrazek se stejnou hashí, tak vezmem ten menší (čili zahodime ten novy vetsi, neb generujem od nejmensiho).

Next

- Pak zacit basic NN trenovani. Chtel bych postupovat postupne, aby se to účelně prozkoumalo. (* Jakoze ne hned trenovat finalni sit ale jit po castech aby se lip prozkoumalo jakou volit architekturu a mit do toho vhled, pac opacne se obavam by se dostavilo sklamani typu “proc to sakra nefunguje”. Navic to umoznuje moznost prijit s nakym vic novel approachem co pasuje na dany problem.)

- Prvni co mi příde strategický skusit je čistě klasifikátor kořenovýho symbolu a ozkoušel to na obrázkách s relativně malym kódem. S tim že to bude nějaká varianta konvoluční sítě. Todle je si myslim základ aby to vůbec mohlo fungovat.

- Až se to povede odladit na nakou prijatelnou akurátnost, tak je cas na dalsi krok. Toto lazeni bych videl na dvou urovnich: lazeni architektury sítě a lazení samotného jazyka. Lazeni jazyka imo neni podvod, neb nemame ho dopredu dany - cilem je vubec takovy jazyk najit. Metriky nad kvalitou jazyků ale jsou: napr muzem merit vyjadrovaci silu jazyka, tedy jak velkou podmnozinu ze vsech obrazku jsme schopni takovym formalizmem vyjádřit. Dalsi potencialne dulezitou casti teto faze je “symetry handling”, tedy odfiltrovávání kódů, ktere ten samý obrázek popisují primarne krkolomnejší cestou než jiný kod, sekundárně klidně stejně krkolomně ale vybereme presto jednu variantu jako normalni formu, aby jsme šli síti naproti v tom, že struktura kodu bude předvidatelnější. Az budem mit jazyk, se kterym jsme spokojeni po hledisku metriky a akuratnosti, je cas na dalsi krok.

- Dalsim imo vhodnym krokem je ozkouseni vznikle site na dalsi symbol family, pokud mozno ortogonalni k te prvni, ale stale jednoducha. Otazka je jestli do toho kriteria jeste nepribrat vhodnost z pohledu naslednych pokusu s atenšnama: jakoze o neco ortogonalnejsi se mi zda F2 nez F3; family xy-funkci (F1), ale u nich nemam promysleny tolik atensny jako u kostek-na-kostkach (F2) family. ta navic ma na vrch v podobnosti (obdelniky). Po rozbehani na druhy family je cas na dalsi krok.

- Tim by melo myslim bejt ziterativneni celeho procesu. U F1 se nabízí využit faktu “že známe attention”: tedy že pro danej symbol víme kam zazoomovat a znova zavolat sit. U F1 to muzem naprogramovat ručně natvrdo.

Experiment ideas

- Zavodit jestli mensi kod najde naucena neuronka nebo evoluce nebo treba MCTS.